Have you ever wondered whether you can park your car in front of the house you live in? It’s a never-ending story for me. Sometimes you just want to know, while driving home, whether there is a spot you would fit into. No worries, let the machine take a look for you. Of course, I did some prior research, but it seems nobody has built a model that classifies how many cars are parked there. With my fairly limited knowledge of machine learning, I looked for a service that would do the job for me. As a GCP user, I was interested in AutoML. To validate the effort, I also created a model in Tensorflow. The whole project can be found on GitHub.

I can already hear your remarks: “What if you just installed an IP webcam and took a look?” Looking at a small photo while you are driving is not a good thing to do. Furthermore, once you can classify how many parking spots are empty, you can use a text-to-speech service to read the number out for you. Isn’t that just cool?

Preparation

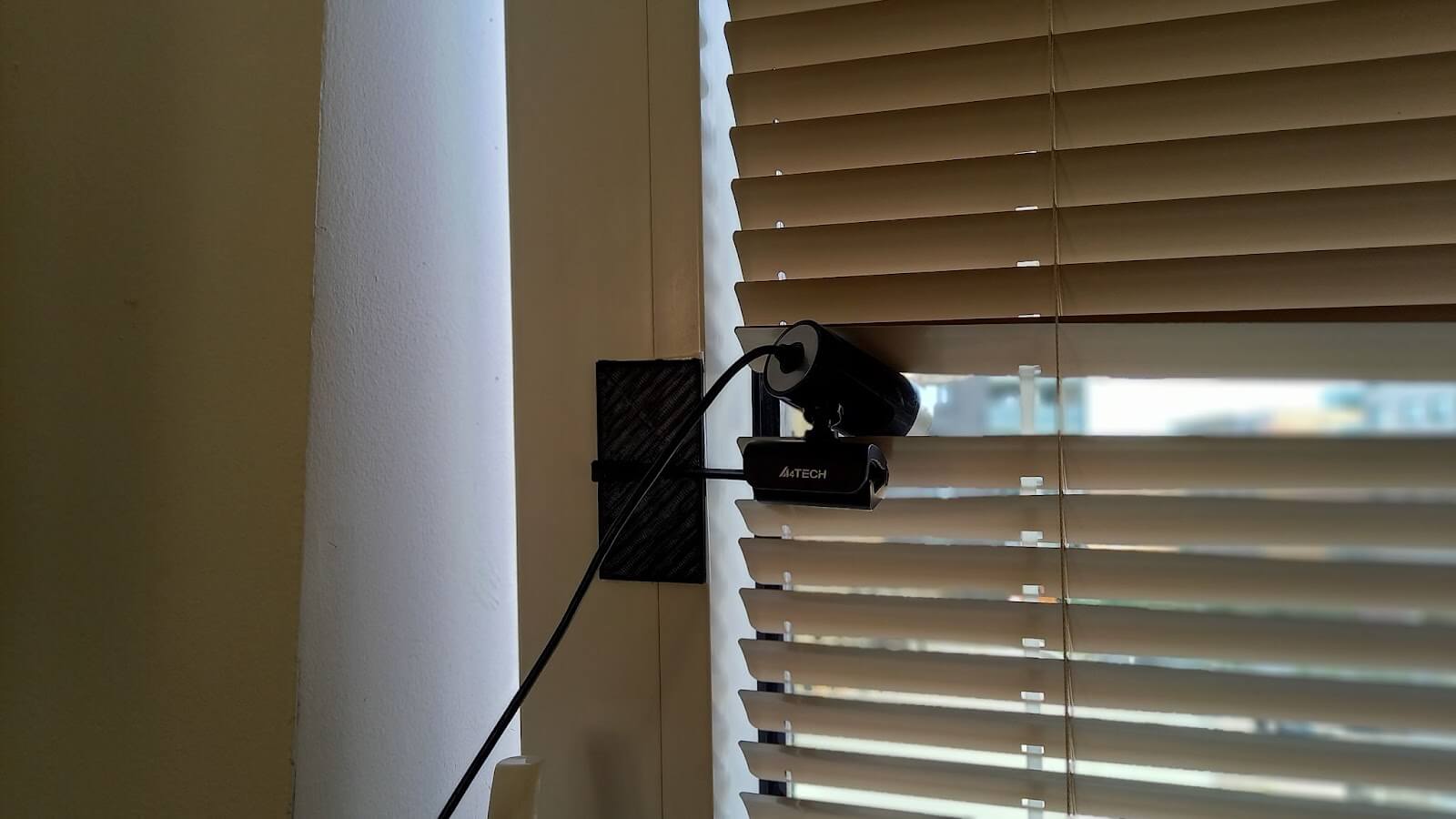

First I needed to collect the pictures. I used a Raspberry Pi 1 because I had one free, together with an old A4tech cam. This old and simple setup has some non-obvious advantages. It’s dirt cheap! Cheap is the theme I was aiming at. Therefore the first cam holder looked like this:

Did you notice my creative use of a crayon and zip ties? Yes, you can’t open the window easily. Yes, I have a girlfriend and she was not that fond of the project. But somehow I managed to keep the setup for a few months without her noticing. Overall, I was just tired of the situation. Every time anyone opened the window, the pictures got skewed. Therefore I created a new camera holder just for the project.

It worked. You just need a 3D printer and double-sided tape.

To capture a picture with a USB cam, you can use fswebcam. I recommend the parameter `-F 10`, which captures ten frames for a single picture. That makes the picture less noisy and blurs out objects that move. It works on multiple levels: parked cars stay still for at least 10 frames, while people somehow can’t. If you are struggling with GDPR, stress no more. Blurring almost always helped me make people unrecognizable.

The whole thing ran from crontab every ten minutes. The script called by cron looked like this:

#!/bin/bash

mount | grep "/mnt/parking_photos" >/dev/null || mount /mnt/parking_photos

fswebcam -D 2 -S 20 -F 10 -r 1920x1080 --no-banner "$(date +"/mnt/parking_photos/%y%m%d_%H%M%S.jpg")"

As you might notice, I check each time whether the mount point is present. That’s because the internal SD card of the Raspberry Pi is very small. I am also trying to keep its I/O load as low as possible, so saving images to the SD card is not an option.

Preprocessing

After collecting all the pictures, I faced multiple issues. First of all, my cam does not see in the dark. That limits the use of my classifier to daylight hours. I can live with that. To remove all the pictures that are black, I used a bash script built on ImageMagick’s convert. It shrinks each image to a single pixel, reads that pixel’s HSB values, and removes the picture unless the brightness is above 20:

if [ "$(convert "$1" -colorspace hsb -resize 1x1 txt:- | grep -o 'hsb\(.*,.*,.*\)' | grep -o '[0-9]*%)' | grep -o '[0-9]*')" -gt 20 ]; then

echo "$1"

else

rm "$1"

fi

The second issue was how to divide all those pictures into categories. Well, I did not have a bash script for that, and if I did, there would be no need for a classifier in the first place. So I just sorted them by hand.
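Going back to the darkness filter for a moment: the same check can be sketched in Python. This is only an illustration, not the script I used. It assumes the pixels are already available as RGB tuples (loading them from a JPEG is left out), and the names `mean_brightness_percent` and `is_too_dark` are made up for this example.

```python
import colorsys


def mean_brightness_percent(pixels):
    """Average HSB brightness (0-100) over an iterable of (r, g, b) tuples in 0-255."""
    total = 0.0
    count = 0
    for r, g, b in pixels:
        # colorsys works on floats in [0, 1]; the third HSV component is the brightness.
        _, _, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        total += v
        count += 1
    if count == 0:
        raise ValueError("no pixels given")
    return 100.0 * total / count


def is_too_dark(pixels, threshold=20):
    """Mirror the bash check: a picture is kept only if its brightness is above the threshold."""
    return mean_brightness_percent(pixels) <= threshold
```

A night shot full of near-black pixels ends up far below the threshold, while a daylight picture sits well above it.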

The problem was that the groups turned out uneven. The largest group was the one where all the parking spaces were filled, and the smallest was the one with four empty parking spots. There was not a single picture where the parking lot was totally empty.

I named the groups as follows:

- 1 - There was only one spot left

- 2 - There were only two spots left

- 3 - I guess you have now spotted the common theme

- 4 - This did not happen too often and I had to remove the group because there was not enough data

- i - Only the place for disabled is empty

- full - The parking is fully occupied and I have to go park the car elsewhere
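How uneven the groups are can be checked with a few lines of Python. This is a minimal sketch that assumes a layout of my own choosing, one subfolder per label (for example `dataset/full/*.jpg`); the function names and the minimum count are hypothetical too.

```python
from pathlib import Path


def count_images_per_label(dataset_dir, pattern="*.jpg"):
    """Return {label: number of images} for a directory with one subfolder per label."""
    counts = {}
    for label_dir in sorted(Path(dataset_dir).iterdir()):
        if label_dir.is_dir():
            counts[label_dir.name] = sum(1 for _ in label_dir.glob(pattern))
    return counts


def underrepresented(counts, min_images):
    """Labels with fewer than min_images pictures, i.e. candidates for dropping."""
    return sorted(label for label, n in counts.items() if n < min_images)
```

Running `underrepresented(count_images_per_label("dataset"), 50)` would list the groups that are too small to train on, which is how a group like 4 gets flagged for removal.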

AutoML

My hope for the service was that I would just give it the data and the list of categories and it would work. Therefore I followed the tutorial which classifies different types of flowers. I made only a few minor changes:

- The tutorial does not say much about what the list of data should look like. I created a script which takes all the pictures uploaded to the GCS bucket and records which subset of the data each picture belongs to.

- I had to lower the score threshold because the model did not feel confident enough about my new testing photos.
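To give an idea of what such a data list looks like, here is a minimal sketch, not the script from my repository. It assumes the import file is a CSV with one `SUBSET,gs://path,label` row per picture, where the subset is TRAIN, VALIDATION or TEST. The 80/10/10 split, the bucket name in the usage note and the function names are assumptions made for this example.

```python
import csv
import hashlib
import io


def assign_subset(uri, validation_pct=10, test_pct=10):
    """Deterministically map an image URI to TRAIN, VALIDATION or TEST."""
    # Hash the URI so the same picture always lands in the same subset.
    bucket = int(hashlib.md5(uri.encode("utf-8")).hexdigest(), 16) % 100
    if bucket < test_pct:
        return "TEST"
    if bucket < test_pct + validation_pct:
        return "VALIDATION"
    return "TRAIN"


def build_import_csv(labelled_uris):
    """Render 'SUBSET,gs://...,label' rows from (uri, label) pairs."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for uri, label in labelled_uris:
        writer.writerow([assign_subset(uri), uri, label])
    return out.getvalue()
```

Calling `build_import_csv([("gs://example-bucket/full/210101_120000.jpg", "full")])` yields one row for that picture. Listing the bucket contents and uploading the resulting file are left out on purpose.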

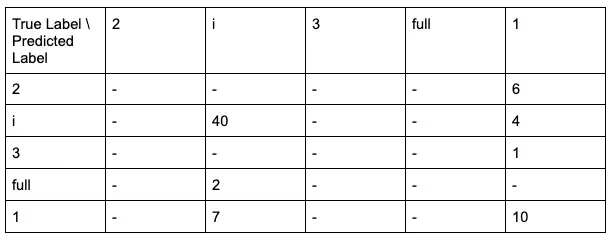

Another disappointment came once I inspected the confusion matrix. GCP describes it as follows: “This table shows how often the model classified each label correctly, and which labels were most often confused for that label.” This functionality is available only if you provide a subset of pictures marked as TEST.

For a lot of combinations (e.g. true label 2, predicted label 2), we can see that the model did not classify any picture from the TEST subset of our data. What’s really misleading is that the model classified three empty parking spots as one empty parking spot. It happened for only one photo, so it’s hard to say whether that is really an issue. The same goes for two and one parking spots. That could be a problem when you are driving to the spot, confident that there is one more free.
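If you want to build such a table yourself from true and predicted labels, a few lines of Python are enough. This is a minimal sketch with a function name of my own, not something AutoML exposes.

```python
from collections import Counter


def confusion_matrix(true_labels, predicted_labels, labels):
    """matrix[i][j] = number of pictures with true label labels[i] predicted as labels[j]."""
    pairs = Counter(zip(true_labels, predicted_labels))
    return [[pairs[(t, p)] for p in labels] for t in labels]
```

Rows are true labels and columns are predicted labels, so everything off the diagonal is a misclassification, such as a picture with three free spots predicted as one.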

Overall, I have to say, I was almost satisfied with the result. Given that my data was rubbish, AutoML did its magic and it kinda worked. I tried a few new photos just to see how it could be used in a future application.

The following picture was classified like this:

Prediction results:

Predicted class name: 2

Predicted class score: 0.44264715909957886

Predicted class name: 3

Predicted class score: 0.4311576187610626

The confidence score was not high, but the classification was right. If you were deciding whether to drive to the parking place or not, you would have your information. Don't worry, I am aware that this is not the right way to decide whether the model works well.
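The two scores above also show why the score threshold matters. Here is a minimal sketch of picking the final answer from such a result; the function name and the threshold values 0.5 and 0.4 are assumptions for illustration, not values taken from AutoML.

```python
def pick_prediction(scores, threshold=0.5):
    """Return (label, score) of the best class, or None if it does not reach the threshold."""
    if not scores:
        return None
    label, score = max(scores.items(), key=lambda item: item[1])
    return (label, score) if score >= threshold else None


scores = {"2": 0.44264715909957886, "3": 0.4311576187610626}
strict = pick_prediction(scores, threshold=0.5)   # no class is confident enough
relaxed = pick_prediction(scores, threshold=0.4)  # class "2" wins
```

With the stricter threshold there is no answer at all, while the relaxed one returns class 2, which matches what I saw after lowering the threshold.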

Tensorflow

To compare AutoML with something you can do on your own computer, I tried to create the model myself using Tensorflow. Again, due to my very limited knowledge of machine learning, take this more or less as something to consider if you try a similar project with a limited set of skills (like me). Fortunately, there is a great tutorial on how to classify flowers with Tensorflow.

There are only a few differences in my implementation of the model. I used a larger layer of Conv2D nodes and enlarged input image size. I did not use data augmentation because flipping, random zooming and rotating the picture of parked cars won’t make any reasonable difference.
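For orientation, a model in the spirit of that tutorial could look roughly like this. It is a sketch under assumptions, not the exact model from my repository: the filter counts (32/64/128), the 180x320 input size and the name `build_model` are picked for illustration only. Data augmentation layers are left out, as described above.

```python
import tensorflow as tf


def build_model(num_classes, image_size=(180, 320)):
    """Small CNN in the style of the TensorFlow image classification tutorial."""
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(*image_size, 3)),
        tf.keras.layers.Rescaling(1.0 / 255),  # scale pixel values to [0, 1]
        tf.keras.layers.Conv2D(32, 3, padding="same", activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Conv2D(128, 3, padding="same", activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(128, activation="relu"),
        tf.keras.layers.Dense(num_classes),  # raw logits, one per parking label
    ])
    model.compile(
        optimizer="adam",
        loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
        metrics=["accuracy"],
    )
    return model
```

With the five groups that survived the cleanup (1, 2, 3, i and full), `build_model(5)` gives a network with five outputs, one per label.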

I also have to mention that training took 2 GB of RAM. Tensorflow decided that using my older Nvidia 1050 was not a good idea and trained everything on the Ryzen 5950X. Somehow, I can’t blame it.

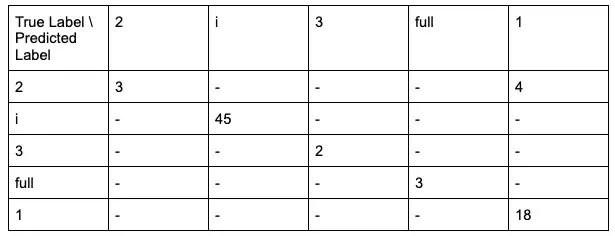

Training usually ended with loss: 0.0633 and accuracy: 0.9750. Here we can see the results in the confusion matrix:

These results (at least to me) seem to be much more promising than in AutoML.

Conclusion

In the end, creating the model myself in Tensorflow worked much better. I guess we can blame the input data. To be honest, I just missed the use case for AutoML in the first place. A much bigger disappointment was awaiting me in the billing. Tensorflow cost me a lot of electric power due to training on the CPU; AutoML cost me 75 USD for 24 hours of training node usage, which works out to roughly 3.13 USD per node hour.

The whole billing of AutoML was a mystery to me. I was lucky and everything I tried was covered by the free credits, but I see a very large potential to pay a lot for a service you might not need at all. You also have to pay for GCS storage. If you deploy the model as Cloud hosted, GCP provisions a node just for classification, which might create additional cost.

My personal advice would be: unless you are 100% sure AutoML is the way to go, spend some time with Tensorflow. The ability to test hyperparameters yourself can lead to better results. I am not a machine learning expert, but after going through a well-written Tensorflow tutorial, I felt confident enough to give it a try. In the end, my results were much better than with AutoML.

What’s next

I started this post by saying I need something that can give me a hint whether I can park in front of the house I live in. Having only a classifier is not enough. AutoML has a Cloud hosted option. It also provides an SDK which you can use in your client apps. For Tensorflow you would have to provision the infrastructure yourself.